It appears that a company called Vesperix is now offering commercial support for FFTW on ARM, presumably based on my work.

I've also been informed some time ago that the Beagleboard project has integrated FFTW-NEON into the Ångström distribution for me. Thanks Koen!

Happy Birthday Beagleboard!

Friday, August 12, 2011

Thursday, August 19, 2010

Final Benchmarks

update-2010-08-20: you can download a consolidated patch of my fftw work (includes all of my changesets) from here. Alternatively, clone my git repository. You can find build instructions here.

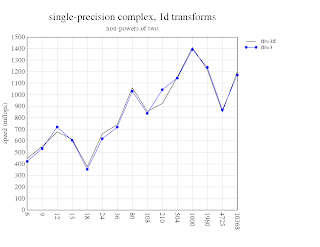

Below are my benchmarks from today. They're only 'final' in the sense that they're the ones I'm submitting for GSoC. Yes, you may well notice that 'fftwff' (i.e. ffmpeg called through fftw) dominates in most cases where power-of-two transforms are used. However, you should also notice that fftw provides a nice dispatching mechanism that lets one use ffmpeg's blazingly fast transforms to compute higher-order transforms through a single API. Pretty nice!

As I already mentioned, these benchmarks do not include the NEON-SIMD-optimized strided memory transfers that I started rather late in the game. You can probably imagine, though, that for any composite transform (i.e. if N != m^p, or any transform of higher dimensionality) those memory transfer routines will improve the performance of ALL versions ('fftw3', 'fftw3ni', 'fftw3n', and 'fftw3ff') by a good factor of 2x, at least. After that, once a couple of straightforward small-prime routines (i.e. 3, 5, 7, 11) are implemented in NEON asm, the non-power-of-two (npot) performance will begin to approach the power-of-two (pot) performance, which is ideal. That is currently what makes fftw so attractive on x86 architectures - the fact that it provides O(N log N) performance for all lengths, not just powers of two, and without zero-padding (for most transforms of significant length).

Please feel free to check out the raw data on Google Docs (bench-1d-pot, bench-1d-npot, bench-2d-pot, bench-2d-npot, bench-3d-pot, bench-3d-npot). Also, just in case it isn't clear, when 'mflops' is on the y-axis, a higher value is better, and when log10(time(s)) is on the y-axis, a smaller value is better.
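A note on reading the 'mflops' axis, assuming the plots follow benchFFT's usual convention (an assumption; the post does not spell it out). benchFFT does not count the operations a library actually performs. For a complex transform of N points that takes t microseconds, it reports a scaled speed (real transforms use 2.5 in place of 5). The sample numbers below are made up purely to show the arithmetic and are not taken from the raw data.

```latex
\[
  \text{mflops} \;=\; \frac{5\,N\,\log_2 N}{t_{\mu s}}
\]
\[
  N = 1024,\quad t = 100\,\mu s:
  \qquad \frac{5 \cdot 1024 \cdot 10}{100} = 512 \ \text{mflops},
  \qquad \log_{10}\!\left(10^{-4}\,\text{s}\right) = -4
\]
```

So the same hypothetical run would sit at 512 on an mflops plot and at -4 on a log10(time(s)) plot.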

Some remarks: ffmpeg does not have API calls for any length that is not a power of two, nor does it have API calls for higher-order (e.g. NxN) transforms, which is why it's missing from some of the graphs below. Furthermore, ffmpeg (like most other libraries) does not support transforms of vector data, but since I added the ffmpeg interface to fftw, it does now :-). In this sense, calling ffmpeg through fftw leverages the excellent planning and dispatching routines of fftw to provide the absolute best possible performance. Eventually, it will be unlikely that anyone would directly use ffmpeg's transforms other than for decoding multimedia - but I think that's best left unchanged. Ffmpeg is likely the best library publicly available for multimedia.

In summary, my improvements to fftw include anything marked 'fftw3n' (neon simd, asm), 'fftw3ni' (neon simd, intrinsics), or 'fftw3ff' (neon simd, ffmpeg). Actually, even those that are marked 'fftw3' are still improved from their original values due to the added cycle counter... and you can count on me adding those missing features once my thesis is submitted ;-)

Wednesday, August 18, 2010

TODO: The Leftovers

The few things that I did not completely finish in time:

- The biggest missing feature by far: optimized, strided (and non-strided) memory copies. You can see where I was going with this. 1D transforms are fairly straight-forward, but 2D and beyond will require a bit of work.

- Further testing of rdft-ffmpeg.c and reodft-ffmpeg.c. I did run some very basic tests to ensure that forward transforms produce the correct output, but not reverse transforms.

- Ideally, a few extremely fast transforms in NEON asm for small, non-power-of-two cases. These would complement the extremely fast power-of-two cases and also make composite transforms much faster (much like on x86).

I'll definitely be tackling those issues after my thesis is handed in. Only 16 more days and then I'm done in academia for the rest of my life!

Monday, August 16, 2010

FFTW: Weekly Report - Week 11, 12, and 13

After much anticipation, here are my very-belated weekly updates, along with another few lovely graphs.

update-20100819(am): I had to restart the benchmark before I left for the lab this morning because the ffmpeg can_do() function in my benchfft source caused a double-free error in glibc. I fixed it this morning (removed the out-of-place junk). The benchmarks will be ready by the time I get home. Since these are my final benchmarks, I'll copy / paste the timing data into oocalc and finally put time on the y-axis instead of mflops. It's a bit of a pain that benchmarking takes 4-6 hours. Sorry for the delay.

update-20100818: Arg! A bug-ridden, cycle-hungry udevd process was absolutely killing the metrics of my last benchmark. It happens every time I reboot, and I just haven't gotten around to fixing it yet. Usually I remember to kill udevd before doing anything else, but I must have accidentally cycled the power on my Beagle last night before starting the benchmark. Below is one graph from the results (which I will replace as soon as the next set of results is finished). You can see several more results of NEON performance under heavy load (and it's still pretty decent!). In particular, it's interesting to see how NEON just jogs leisurely along while 'top' shows a single process using 95% of the CPU cycles. If you look at the graphs under heavy load, you'll probably also notice how strided and non-strided memory transfers take a beating. I've already restarted the benchmark and should have some new graphs up later tonight.

When I set out to improve FFTW on NEON-enabled ARM processors, the main goal and focus was to be on the TI OMAP 3430, for the BeagleBoard Project, and that I did. Originally, the speed increase was rather modest (1.5-2x or so), because I simply implemented the SIMD / codelet API that most architectures use already. However, the NEON coprocessor has no hardware instruction rescheduling (i.e. it is in-order), nor does the Cortex-A8 core, and without those two things (not to mention better scheduling from GCC), FFTW's codelet interface does not perform nearly as well as hand-written assembly code. In contrast, on x86 processors, the benefit of the OOE unit (and likely far better compiler optimizations) is clearly visible, as FFTW outperforms FFMPEG even on my eee701 (shown in the graphs below). The Cortex-A9 does have an out-of-order unit, but it's unlikely that this will have a major impact on NEON scheduling.

My most recent BeagleBoard performance comparison included FFMPEG's transform for powers of two, which was faster by far than FFTW using codelets, so I knew that I had some high goals to reach. I originally thought it would be smart to transpose lots of highly optimized assembly into a new non-portable / architecture-dependent simd subfolder in FFTW, trying my damnedest to get my code to measure up to FFMPEG, but then I realized that was just stupid when there was already a perfectly optimized FFMPEG library right in front of me. Next, I went to lots of extra trouble to create a separate ffmpeg_fft library which I bundled with FFTW. That was fine, although I did dig up an interesting alignment-related bug with respect to how FFTW handles FFTW_FORWARD and FFTW_BACKWARD. Also, I think I may have dug up another Cortex-A8 errata-worthy tidbit, or found a bug in the Linux kernel's handling of NEON instructions... but that's slightly tangential.

Since my last post, I've smoothed over my work, added the beginnings of NEON copy acceleration for buffered / rank-0 transforms, and run a few tests that you can check out here (here) and here. The results might be surprising even for the non-power-of-two cases. I was surprised at first, myself. Originally, I was curious as to how many of the non-power-of-two / prime algorithms worked. After some perusal of related articles on IEEEXplore, I learned that many of the non-power-of-two algorithms (e.g. Bluestein, Rader) rely on some form of recursion or composite solution where power-of-two DFTs (or other small, prime factors) are often key, which is why non-power-of-two performance was slightly increased with NEON SIMD codelets and FFMPEG routines. In the non-power-of-two case, typically what would happen is that . I found Rader's algorithm to be particularly interesting; these days it's treated as common knowledge in most advanced DSP courses. Non-power-of-two routines are still not as fast as ffmpeg's power-of-two algorithms, but it might be possible to improve that with a bit more work on NEON copy acceleration.

A few technical details:

- Not only the DFT, but also the RDFT, DCT, and DST interfaces to FFMPEG were brought out into FFTW, which gives much more flexibility for varying applications.

- Currently the FFMPEG interfaces stick with the in-place, contiguous, and aligned API of FFMPEG. That is, any non-aligned or composite transforms require buffering before being fed into FFMPEG. In FFTW terms, changing strided data into non-strided data is called a rank-0 transform. Rank-0 transforms always occur for composite DFTs and thus require a very efficient set of copying routines.

- The ARMv7 cycle timer made a major difference to performance, since simple estimation methods were no longer being used to choose an algorithm. I would highly suggest that people apply Mans' USER_PMON patch and recompile their kernel before trying out my changes.

- Since FFTW's interface to FFMPEG is generic, the native library may or may not actually use native SIMD instructions. As we all know, misalignment with SIMD instructions is bad, so it's necessary to ensure that input data is properly aligned. Currently FFMPEG uses a constant 16-byte alignment, which is straightforward to account for, but FFTW does a clever little swap of real and imaginary input pointers to indicate an IDFT (i.e. data is no longer aligned). After some time, I found a bit of trickery to get around that, which I will document further on elinux.org.

- Lastly, if you choose to link with FFMPEG, please ensure that you have --enable-hardcoded-tables selected. Otherwise, FFMPEG (current git) will cause a segmentation fault (in cos table initialization). That is an FFMPEG related bug that I was unlucky enough to encounter during my testing phase.
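To make the pointer-swap remark in the list above concrete, here is a minimal sketch in plain C. It is not FFTW or FFMPEG code, and the names `dft4_forward`, `dft4`, and `is_aligned16` are hypothetical. It uses a 4-point DFT (whose twiddle factors are just 1, -i, -1, i, so no trig is needed) and shows two things: running the forward routine with the real and imaginary pointers swapped yields the unnormalized inverse, and the swapped "real" pointer sits 4 bytes past a 16-byte boundary. The workaround mentioned in the post is not described there, so the sketch does not attempt to show it.

```c
#include <assert.h>
#include <stdint.h>

/* Forward 4-point DFT on interleaved complex floats.
 * ri/ii point at the first real/imaginary part; consecutive
 * elements are 2 floats apart. Safe for in-place use because
 * all inputs are read before any output is written. */
void dft4_forward(const float *ri, const float *ii, float *ro, float *io)
{
    float ar = ri[0], ai = ii[0];
    float br = ri[2], bi = ii[2];
    float cr = ri[4], ci = ii[4];
    float dr = ri[6], di = ii[6];

    ro[0] = ar + br + cr + dr;  io[0] = ai + bi + ci + di;  /* a + b + c + d   */
    ro[2] = ar + bi - cr - di;  io[2] = ai - br - ci + dr;  /* a - ib - c + id */
    ro[4] = ar - br + cr - dr;  io[4] = ai - bi + ci - di;  /* a - b + c - d   */
    ro[6] = ar - bi - cr + di;  io[6] = ai + br - ci - dr;  /* a + ib - c - id */
}

/* Same kernel for both directions: the backward transform is
 * obtained purely by swapping the real and imaginary pointers.
 * The result is unnormalized (scaled by 4). */
void dft4(float *data, int backward)
{
    assert(data != 0);
    if (!backward)
        dft4_forward(data, data + 1, data, data + 1);
    else
        dft4_forward(data + 1, data, data + 1, data);
}

/* Returns nonzero if p lies on a 16-byte boundary. */
int is_aligned16(const void *p)
{
    return ((uintptr_t)p & 15u) == 0;
}
```

With a 16-byte-aligned buffer `d`, `is_aligned16(d)` holds but `is_aligned16(d + 1)` does not, which is the situation a SIMD routine expecting aligned input has to cope with when it is handed the swapped pointers.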

Having a cycle counter means that FFTW will no longer be restricted to running in ESTIMATE mode on ARMv7, which will allow it to accurately time several different strategies, and then store wisdom files to speed-up future calculations.

So the bottom line is that FFMPEG's blazingly fast power-of-two transforms are a benefit to all of FFTW's algorithms, including those for prime and non-power-of-two input lengths. However, such composite transforms rely heavily on efficient strided memory copy algorithms (rank-0 transforms). Since I only just started chipping away at that (for NEON), the performance for non-power-of-two transforms is still less than ideal. Also, I should note that any higher-order transform (e.g. for images or volumes) also requires highly efficient rank-0 transforms. Hence, none of my graphs show higher-order throughput results.
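For readers who have not met the term, a rank-0 transform is essentially a copy with strides. The scalar sketch below only illustrates the idea; it is not the NEON code from this project, and `cpx`, `gather_strided`, and `scatter_strided` are hypothetical names. It gathers every `stride`-th complex element into a contiguous buffer (which an in-place, contiguous FFT routine could then process) and scatters the result back.

```c
#include <assert.h>
#include <stddef.h>

/* Interleaved single-precision complex number. */
typedef struct { float re, im; } cpx;

/* Gather n elements spaced `stride` apart in src into contiguous dst. */
void gather_strided(cpx *dst, const cpx *src, size_t n, size_t stride)
{
    assert(dst != NULL && src != NULL);
    for (size_t i = 0; i < n; i++)
        dst[i] = src[i * stride];
}

/* Scatter n contiguous elements of src back to dst, `stride` apart. */
void scatter_strided(cpx *dst, const cpx *src, size_t n, size_t stride)
{
    assert(dst != NULL && src != NULL);
    for (size_t i = 0; i < n; i++)
        dst[i * stride] = src[i];
}
```

For a row-major 3x4 matrix `m`, `gather_strided(col, m + 1, 3, 4)` pulls out column 1. A 2D transform does this for every column, which is why slow copies hurt higher-order transforms so badly.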

There is one more thing worth noting. Rather than using a bundled ffmpeg_fft library inside FFTW (as I initially did for testing), I decided to take the simple and obvious approach. That is, I chose to link to FFMPEG from within the FFTW code dynamically (statically linking is also possible). Why?

- maintenance becomes much easier,

- bundled libraries are inherently evil, and

- my contribution then becomes architecture agnostic.

In the most ideal case, genfft would have been optimized to directly output optimized ARM / NEON machine code, much like a JIT compiler, but that would probably take much more time than was available. At this point, I think I can finally say that I'm happy with FFTW's throughput results (and the potential for improvement with accelerated NEON rank-0 transforms) even through the 'brute force' methods that I chose. That being said, although the code is more-or-less finished and more-or-less bug-free, the fftw-neon project still. I will probably only have time to do some instructional documentation and a demonstration screencast, but it shouldn't be too hard to put together a bitbake recipe at a later date.

Although I thoroughly enjoyed working on this project and did put a lot of time into it (some of which really should have been dedicated to my MSc thesis), much of the credit should be attributed to the authors of FFMPEG and FFTW. I also would like to thank the people who offered me advice and encouragement - specifically my mentor Mans, Matteo from the FFTW project, as well as Jason and Koen from #beagle. It has definitely been a good experience working on FFTW, and I feel inclined to do some further optimizations on it in the future. Specifically, that would include NEON-accelerated rank-0 transforms and perhaps some small-prime algorithms in NEON assembler (e.g. for N=3, 5, 7).

Please drop me a line if you find this work useful - I always love to hear how I've helped others out. Also, within the next few days, please feel free to visit my project page on elinux.org for documentation, instructions, demonstrations, and more. In case anyone is interested, you could probably also anticipate seeing the results of this project ending up in a certain free version of a popular MAtrix maTh LibrAry, Built for mobile devices (i.e. GNU Octave).

Monday, August 9, 2010

Meanwhile

I'm waiting for another benchmark to finish to publish my (rather lengthy) weekly update. It should be done within the next hour. Meanwhile, here's a small sample of many more graphs to come.

update-20100812 (am): right before I was about to publish the rest of the graphs, I ran into a really strange (probably alignment-related) bug that was causing fftwff to stop at N=32. I've since fixed the bug, but also realized that I completely neglected optimized memory copies! FFTW terms these 'rank-0' transforms, and you can probably imagine that they're absolutely essential for pretty much everything. This morning I identified the appropriate files to fix, and will get to it tonight after spending the rest of the day on my thesis. For the impatient, I gracefully refer you to my git repository.

update-20100814 (pm): ... found the bug... oddly enough it was not due to misalignment. It was at libavcodec/fft.c:75, in ff_init_ff_cos_tabs(). I rebuilt ffmpeg with hardcoded tables.

update-20100816 (am): I've since fixed several other bugs to do with alignment and vector strides, added the beginnings of memory copy acceleration (for rank-0 transforms and buffered operations), and am (yet again) generating lots of lovely graphs.

Tuesday, July 27, 2010

FFTW: Weekly Report #10

After getting an extension on my thesis last week, I've been able to get back into FFTW a bit more, so I started going after the main thing on my agenda: adding non-portable, architecture-specific, NEON-enabled fft routines that do NOT use codelets (gitorious). I decided to add these routines in dft/simd/nonportable for lack of a better destination.

The first step was to determine how to expose a new fft algorithm / implementation to fftw's planner. Taking a look at an example like dft/bluestein.c, it was clear that each algorithm / implementation would use a function of the form X(dft_NAME_register) to register with the planner, and that each of the functions to register would need to appear in a const solvtab structure. In dft/conf.c, each of the solver tables (standard & simd) is added to the list of possible solvers via X(dft_conf_standard).

In dft/simd/nonportable, a subdirectory for each architecture (in my case 'arm') should be defined, and added to dft/simd/nonportable/codlist.c (if supported).
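The registration pattern described above can be sketched as a NULL-terminated table of register functions that gets walked once at planner setup. This is a toy model written for illustration, not FFTW's internal code: every `toy_*` name, the solver labels, and the fixed-size planner are assumptions, and the real planner stores far more than a list of names.

```c
#include <assert.h>
#include <stddef.h>

#define TOY_MAX_SOLVERS 8

/* Toy planner: just remembers which solvers registered themselves. */
typedef struct {
    const char *names[TOY_MAX_SOLVERS];
    size_t count;
} toy_planner;

/* One table entry: a register function plus its name for debugging. */
typedef struct {
    void (*reg)(toy_planner *);
    const char *reg_name;
} toy_solvtab_entry;

void toy_register_solver(toy_planner *p, const char *name)
{
    assert(p->count < TOY_MAX_SOLVERS);
    p->names[p->count++] = name;
}

/* Each algorithm / implementation exposes one register function. */
void toy_dft_bluestein_register(toy_planner *p) { toy_register_solver(p, "bluestein"); }
void toy_dft_neon_pot_register(toy_planner *p)  { toy_register_solver(p, "neon-pot"); }

/* The const table that lists every register function for one build. */
const toy_solvtab_entry toy_solvtab_arm[] = {
    { toy_dft_bluestein_register, "toy_dft_bluestein_register" },
    { toy_dft_neon_pot_register,  "toy_dft_neon_pot_register" },
    { NULL, NULL }  /* terminator */
};

/* Walk a table and let each entry register itself with the planner. */
void toy_solvtab_exec(const toy_solvtab_entry *tbl, toy_planner *p)
{
    for (; tbl->reg != NULL; ++tbl)
        tbl->reg(p);
}
```

The appeal of this layout is that adding an architecture-specific solver means adding one register function and one table row; nothing in the planner itself has to change.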

If the ESTIMATE flag is passed to the fftw planner, then the chosen algorithm / implementation will be based on the number of additions, multiplications, and other operations as reported by all available algorithms and implementations. If the PATIENT flag is passed to the planner, then most available algorithms and implementations will be timed (now I definitely need to fix the armv7a timer code), and the one with the shortest time will be selected. I don't yet know of a way to specifically select which algorithm to use via the advanced or guru interfaces although that useful feature might deliberately not even be available in fftw.
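The difference between the two planning modes can be illustrated with a toy selector. This is only a sketch of the idea and not how FFTW's planner is implemented: the `candidate` struct, both `pick_*` functions, the two kernels, and the operation counts passed in by the caller are all made up. One function chooses by self-reported operation count without running anything; the other runs every candidate and keeps the fastest, which is why a working timer matters.

```c
#include <assert.h>
#include <stddef.h>
#include <time.h>

/* A hypothetical candidate implementation of the same operation. */
typedef struct {
    const char *name;                   /* label only                   */
    double est_ops;                     /* self-reported adds/muls/etc. */
    void (*run)(float *buf, size_t n);  /* the code to execute          */
} candidate;

/* Two toy kernels that do the same work in a different order. */
void toy_negate_forward(float *buf, size_t n)
{
    for (size_t i = 0; i < n; i++) buf[i] = -buf[i];
}

void toy_negate_backward(float *buf, size_t n)
{
    for (size_t i = n; i > 0; i--) buf[i - 1] = -buf[i - 1];
}

/* ESTIMATE-like: lowest reported operation count wins; nothing is run. */
size_t pick_by_estimate(const candidate *c, size_t count)
{
    assert(count > 0);
    size_t best = 0;
    for (size_t i = 1; i < count; i++)
        if (c[i].est_ops < c[best].est_ops) best = i;
    return best;
}

/* PATIENT-like: run each candidate `reps` times, keep the fastest. */
size_t pick_by_timing(const candidate *c, size_t count,
                      float *buf, size_t n, int reps)
{
    assert(count > 0);
    size_t best = 0;
    clock_t best_t = 0;
    for (size_t i = 0; i < count; i++) {
        clock_t t0 = clock();
        for (int r = 0; r < reps; r++) c[i].run(buf, n);
        clock_t dt = clock() - t0;
        if (i == 0 || dt < best_t) { best = i; best_t = dt; }
    }
    return best;
}
```

The sketch uses `clock()` only because it is portable; its resolution is coarse, and a measurement-based planner is only as good as its timer, which is the motivation for a proper cycle counter on ARMv7.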

So, now that I have the groundwork laid out, I can go ahead and splice some of the ffmpeg power-of-two fft code into fftw in dft/simd/nonportable/arm. I'll try to get that done within the next day or two. All that's necessary is a bit of a bridge to join the two APIs. After that I will begin the interesting journey to see if I can improve the various non-power-of-two algorithms with some elegantly crafted assembler code.

Monday, July 19, 2010

FFTW: Weekly Report #9

Hi everyone,

There was not a lot of activity this week, mainly due to playing catch-up on my thesis.

Highlights: Misc Repository

- ffmpeg's fft was separated out of ffmpeg into its own small library (<39kB)

- ffmpeg's fft is integrated with benchfft, for easy visualization

Todos:

* fix real / reverse portions of ffmpeg's benchmark in benchfft

* fix benchfft's graphing features to display runtime rather than mflops on the y-axis.

Please see my last post for an initial graph of ffmpeg's fft vs fftw with neon, as well as details about my plans with fftw.
